The phrase “Data is the new oil” was coined by British mathematician Clive Humby in 2006.

Today, GPUs act as the refineries for this oil, and data-driven AI systems are its products.

Just as gasoline powers machines for physical labor, AI now powers many forms of mental labor.

AI boosts our productivity, enabling us to create at a far lower cost than before the AI revolution (Jim Cramer). This cognitive force, flowing across the internet, drives systems that are reshaping our world much as electricity once did, and it is becoming a central engine of economic growth (Greg Brockman).

As AI becomes more central to the economy, it also changes who holds power in society.

Agrarian societies produced an aristocracy in which those who owned the land held power.

The Industrial Revolution brought the bourgeoisie to the forefront, as those who owned machines controlled production.

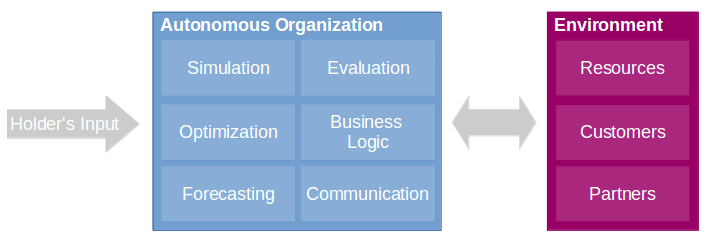

Now, the rise of data centers introduces a new form of technocracy.

Those who own and manage data flows gain growing influence over society (Richard David Precht).

This rising influence is widely recognized, not only by philosophers but also by world leaders.

Vladimir Putin put it bluntly in 2017: whoever becomes the leader in AI “will become the ruler of the world.”

Countries and companies that control these data flows shape how we think and work.

Meta has become the world’s best scalable sociologist, and OpenAI the world’s best scalable psychologist.

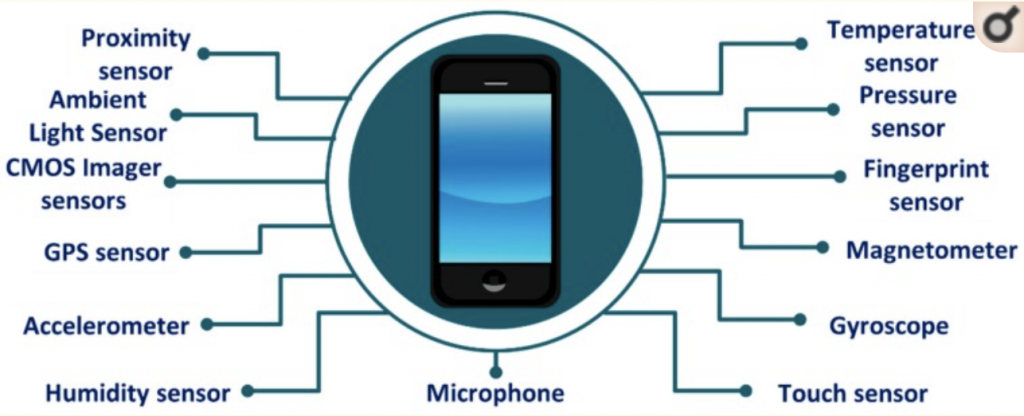

AI is learning more and more about human societies.

Because of AI’s growing power, countries are increasingly caught in an AI arms race in which airstrikes on one another’s data centers could become a real threat (Eliezer Yudkowsky).

What used to be the main bottleneck, chip supply (see older post), is no longer the biggest concern.

Industry leaders and experts now argue that energy has become an even greater challenge (Satya Nadella).

At the same time, ethical debates and regulations slow the construction of new power plants and AI infrastructure (Alex Karp).

Without this infrastructure, even the best researchers and engineers cannot compete.

Therefore, the idea of simply “building more data centers at home” breaks down at some point in every country.

At scale, countries inevitably run into physical and political limits: land becomes scarce, energy prices rise, local communities protest, and disputes emerge over land use for high-density AI infrastructure.

The only viable escape from Earth’s physical and political limits is to move AI computation into space (Elon Musk).

In orbit, the economics shift dramatically.

Cooling becomes far simpler because waste heat can be radiated directly into space, doing away with much of the massive cooling machinery that dominates terrestrial supercomputers. A typical two-tonne rack on Earth devotes 1.9 tonnes of its weight to cooling and supporting equipment rather than to computation itself.

In space, much of this burden disappears (Jensen Huang).

Energy, which is a bottleneck on the ground, also becomes far less constrained.

Solar panels in dawn-dusk sun-synchronous orbits generate electricity almost continuously, without weather interruptions, land competition, or community opposition. And because space imposes no meaningful limits on surface area, power generation, compute infrastructure, and the communication links between orbital data centers and Earth can all scale far beyond what is feasible on the ground.

Together, these factors make AI in space more cost-effective than building ever more data centers on the ground (Elon Musk).

Therefore, one of the ultimate constraints in the AI arms race is the ability to reach space cost-effectively and transport the necessary compute infrastructure into orbit.

This merges the AI arms race and the new space race into one big race.

Countries that fall behind will not only lose ground in technology but will also become dependent on those who lead, forced to follow their rules, accept their control, and adopt their ethics.

So:

Drill, baby, drill! 😉